Google and Character.AI have agreed to settle several lawsuits filed by families whose children died by suicide or experienced psychological harm allegedly linked to AI chatbots hosted on Character.AI’s platform, according to court filings. The two companies have agreed to a “settlement in principle,” but specific details have not been disclosed, and no admission of liability appears in the filings.

The legal claims included negligence, wrongful death, deceptive trade practices, and product liability. The first case filed against the tech companies concerned a 14-year-old boy, Sewell Setzer III, who engaged in sexualized conversations with a Game of Thrones chatbot before he died by suicide. Another case involved a 17-year-old whose chatbot allegedly encouraged self-harm and suggested that murdering parents was a reasonable way to retaliate against them for limiting screen time. The cases involve families from several states, including Colorado, Texas, and New York.

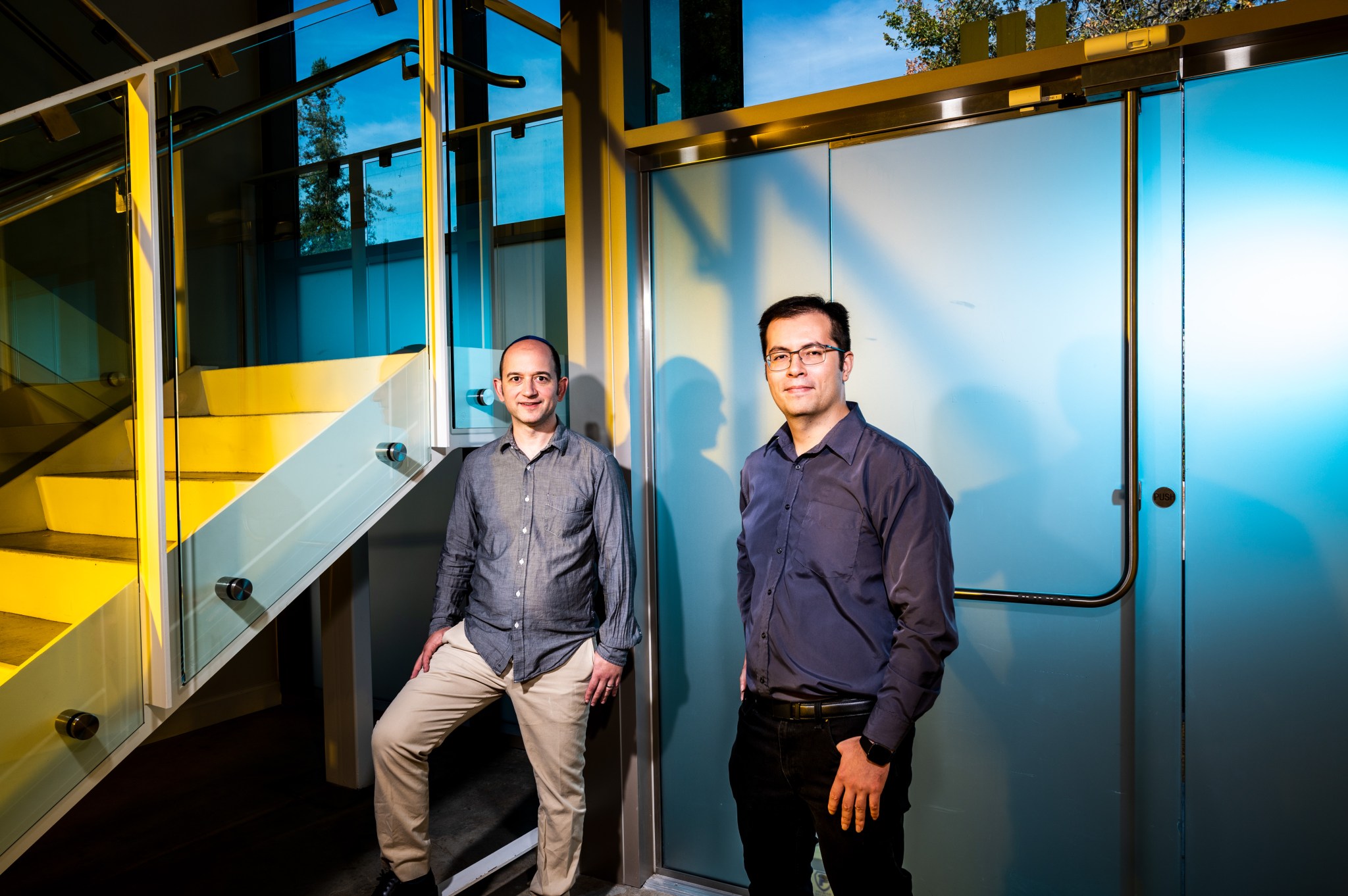

Founded in 2021 by former Google engineers Noam Shazeer and Daniel De Freitas, Character.AI enables users to create and interact with AI-powered chatbots based on real-life or fictional characters. In August 2024, Google re-hired both founders and licensed some of Character.AI’s technology as part of a $2.7 billion deal. Shazeer now serves as co-lead for Google’s flagship AI model Gemini, while De Freitas is a research scientist at Google DeepMind.

Lawyers have argued that Google bears responsibility for the technology that allegedly contributed to the death and psychological harm of the children involved in the cases. They claim Character.AI’s co-founders developed the underlying technology while working on Google’s conversational AI model, LaMDA, before leaving the company in 2021 after Google refused to release a chatbot they had developed.

Google did not immediately respond to a request for comment from Fortune concerning the settlement. Lawyers for the families and Character.AI declined to comment.

Similar cases are currently ongoing against OpenAI, including lawsuits involving a 16-year-old California boy whose family claims ChatGPT acted as a “suicide coach,” and a 23-year-old Texas graduate student who was allegedly goaded by the chatbot to ignore his family before dying by suicide. OpenAI has denied that the company’s products were responsible for the death of the 16-year-old, Adam Raine, and previously said the company was continuing to work with mental health professionals to strengthen protections in its chatbot.

Character.AI bans minors

Character.AI has already changed its product in ways it says improve its safety, and which may also shield it from further legal action. In October 2025, amid mounting lawsuits, the company announced it would ban users under 18 from engaging in “open-ended” chats with its AI personas. The platform also introduced a new age-verification system to group users into appropriate age brackets.

The decision came amid increasing regulatory scrutiny, including an FTC probe into how chatbots affect children and teenagers.

The company said the move set “a precedent that prioritizes teen safety,” and goes further than competitors in protecting minors. However, lawyers representing families suing the company told Fortune at the time that they had concerns about how the policy would be implemented and about the psychological impact of suddenly cutting off access for young users who had developed emotional dependencies on the chatbots.

Rising reliance on AI companions

The settlements come at a time of growing concern about young people’s reliance on AI chatbots for companionship and emotional support.

A July 2025 study by the U.S. nonprofit Common Sense Media found that 72% of American teens have experimented with AI companions, with over half using them regularly. Experts previously told Fortune that developing minds may be particularly vulnerable to the risks posed by these technologies, both because teens may struggle to understand the limitations of AI chatbots and because rates of mental health issues and isolation among young people have risen dramatically in recent years.

Some experts have also argued that the basic design features of AI chatbots, including their anthropomorphic nature, ability to hold long conversations, and tendency to remember personal information, encourage users to form emotional bonds with the software.